langchain-core==1.4.0

Changes since langchain-core==0.3.86

- chore(infra): merge v1.4 into master (#37350)
- chore: bump urllib3 from 2.6.3 to 2.7.0 in /libs/core (#37329)
- fix(core): avoid eager pydantic.v1 import in @depr

| Date | Count |
|---|---|
| 2026-05-06 | 34 |
| 2026-05-07 | 48 |
| 2026-05-08 | 26 |
| 2026-05-09 | 14 |
| 2026-05-10 | 36 |
| 2026-05-11 | 288 |
| 2026-05-12 | 56 |

Monthly release notes for VS Code 1.120.

Monthly release notes for VS Code 1.119.

Monthly release notes for VS Code 1.118.

Monthly release notes for VS Code 1.117.

Monthly release notes for VS Code 1.116.

Monthly release notes for VS Code 1.115.

Monthly release notes for VS Code 1.114.

Monthly release notes for VS Code 1.113.

Monthly release notes for VS Code 1.112.

Monthly release notes for VS Code 1.111.

og

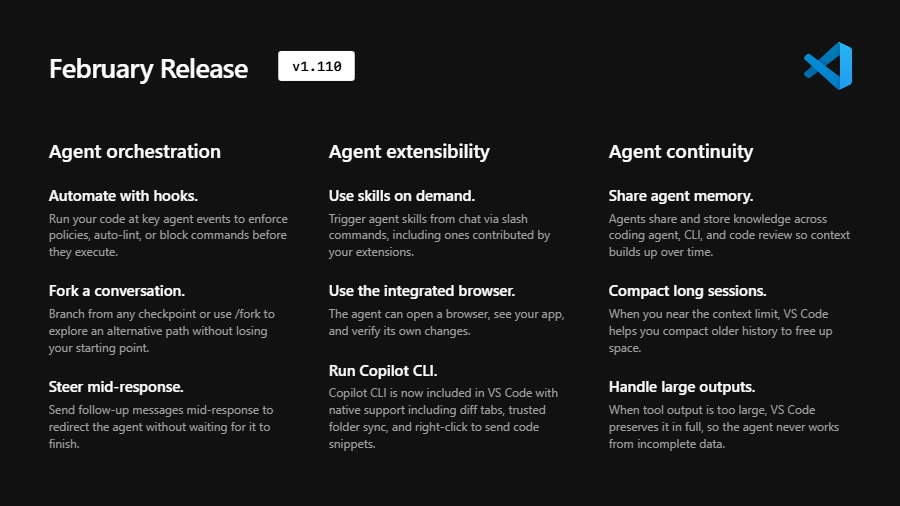

og EN Monthly release notes for VS Code 1.110.

EN Monthly release notes for VS Code 1.110.

og

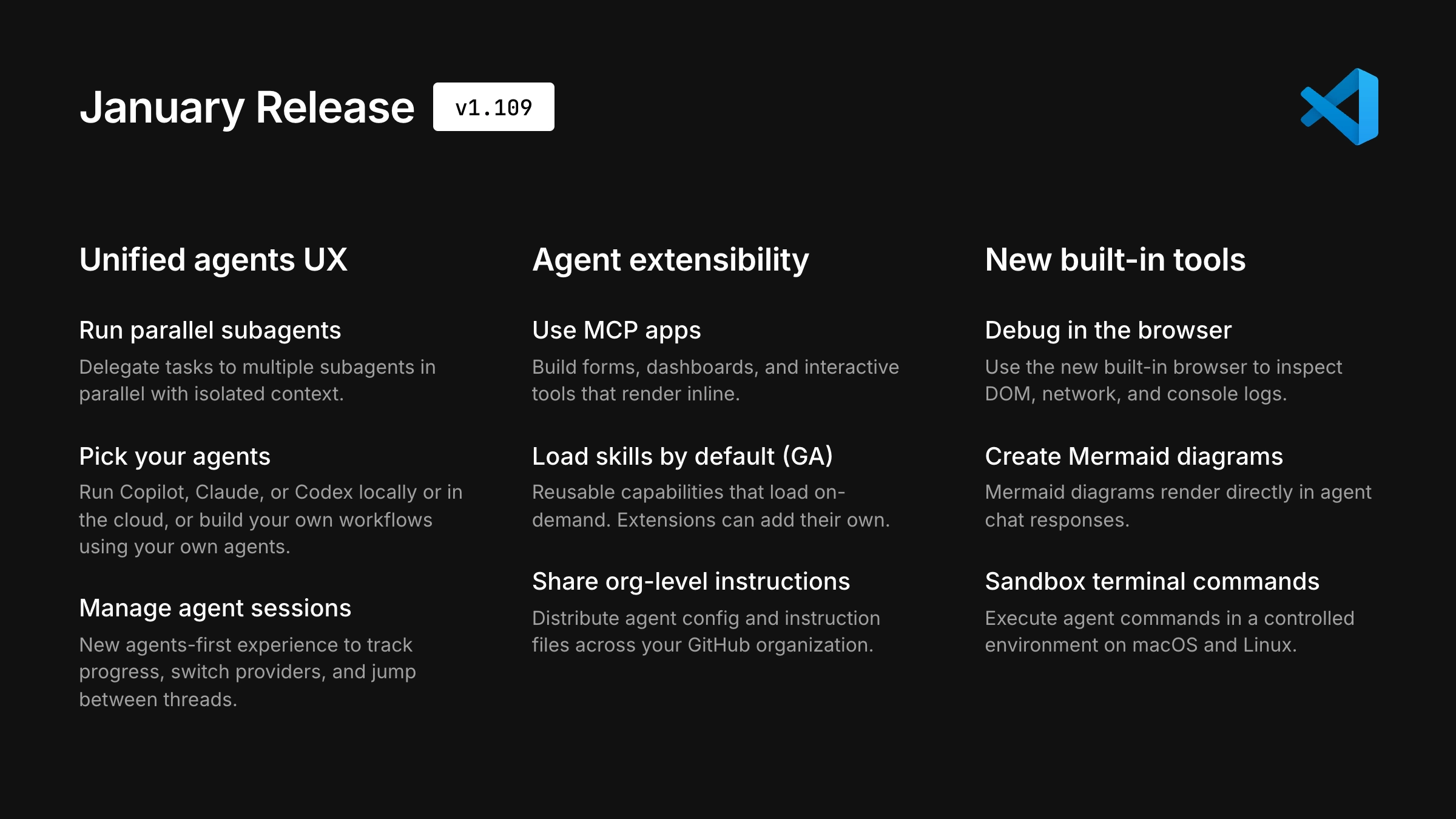

og EN Monthly release notes for VS Code 1.109.

EN Monthly release notes for VS Code 1.109.

arXiv:2605.08314v1 Announce Type: cross Abstract: SVD-based Low-rank compression reduces transformer parameters and nominal FLOPs, but these savings often translate poorly into real LLM serving speedu

arXiv:2605.09070v1 Announce Type: cross Abstract: Many jailbreak attack research papers report attack success rates for a limited number of parameter settings, even though there are many combinations

arXiv:2605.09228v1 Announce Type: cross Abstract: Most LLM benchmarks score how well a model responds to explicit requests. They leave unmeasured a different conversational ability: noticing and actin

arXiv:2605.10848v1 Announce Type: cross Abstract: Does a lexical retriever suffice as large language models (LLMs) become more capable in an agentic loop? This question naturally arises when building

arXiv:2605.08200v1 Announce Type: new Abstract: A pervasive intuition holds that vision-language models (VLMs) are most trustworthy when their attention maps look sharp: concentrated attention on the

arXiv:2605.08220v1 Announce Type: new Abstract: The automated extraction of data from scientific charts is a critical task for large-scale literature analysis. While multimodal Large Language Models (

arXiv:2605.08354v1 Announce Type: new Abstract: Aligning multimodal generative models with human preferences demands reward signals that respect the compositional, multi-dimensional structure of human