langchain-core==1.4.0

Changes since langchain-core==0.3.86

- chore(infra): merge v1.4 into master (#37350)
- chore: bump urllib3 from 2.6.3 to 2.7.0 in /libs/core (#37329)
- fix(core): avoid eager pydantic.v1 import in @depr

| Date | Count |
|---|---|
| 2026-05-06 | 34 |
| 2026-05-07 | 48 |
| 2026-05-08 | 26 |
| 2026-05-09 | 14 |
| 2026-05-10 | 36 |
| 2026-05-11 | 288 |
| 2026-05-12 | 56 |

Monthly release notes for VS Code 1.120.

Monthly release notes for VS Code 1.119.

Monthly release notes for VS Code 1.118.

Monthly release notes for VS Code 1.117.

Monthly release notes for VS Code 1.116.

Monthly release notes for VS Code 1.115.

Monthly release notes for VS Code 1.114.

Monthly release notes for VS Code 1.113.

Monthly release notes for VS Code 1.112.

Monthly release notes for VS Code 1.111.

og

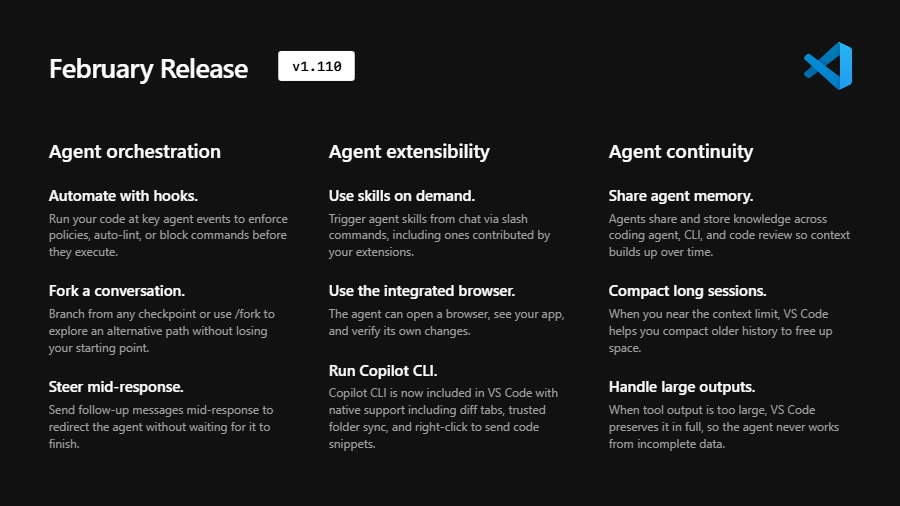

og EN Monthly release notes for VS Code 1.110.

EN Monthly release notes for VS Code 1.110.

og

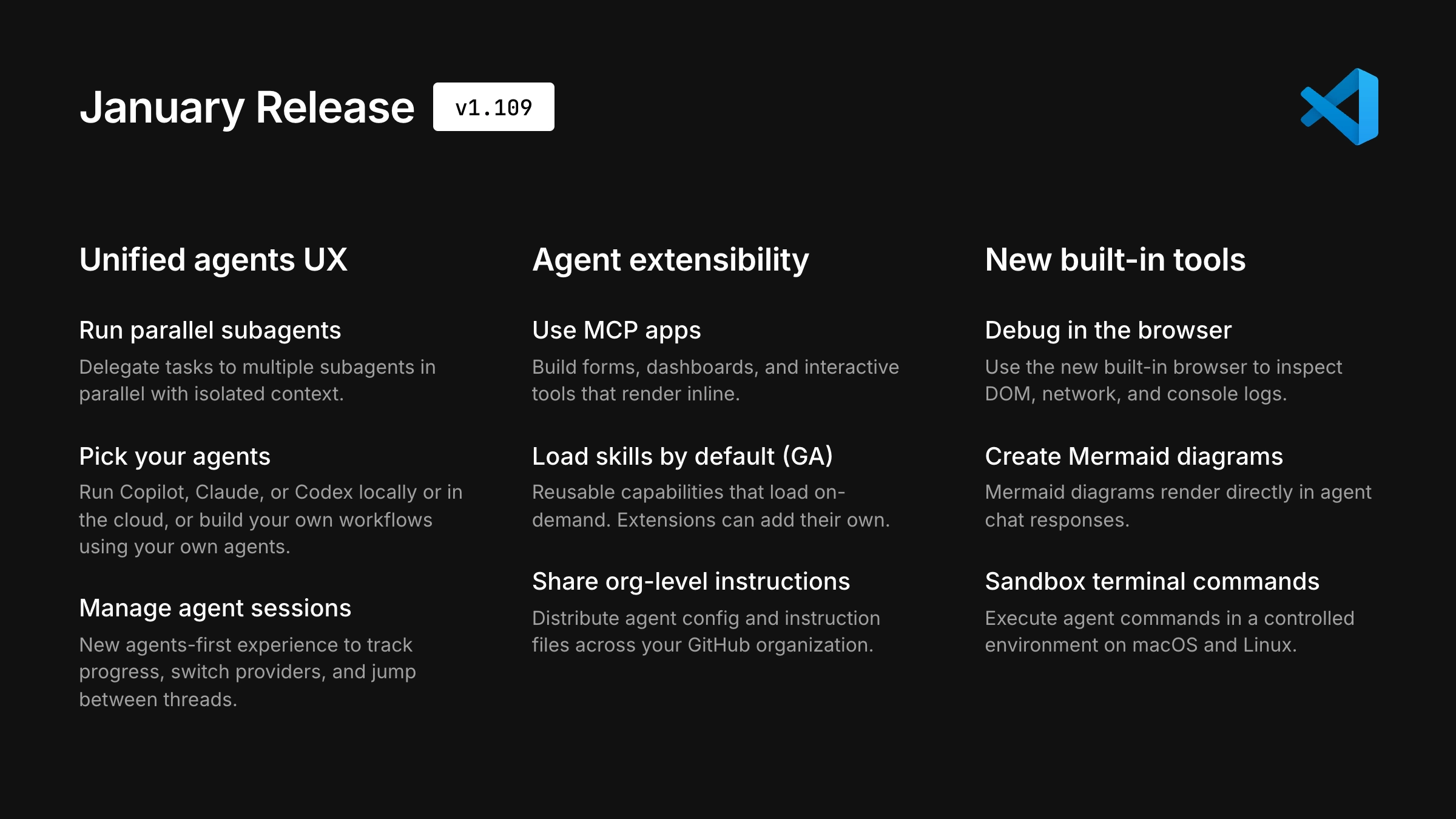

og EN Monthly release notes for VS Code 1.109.

EN Monthly release notes for VS Code 1.109.

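AI summary: Introduction. When you use both OpenAI Codex and Claude Code, a very practical difference emerges, separate from the simple question of which one is smarter. That difference is the sense of cost. Cost here does not mean only the monthly fee. How much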

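AI summary: Here are notes on three prompts I personally use often to make learning more efficient, along with examples of actual exchanges. 1. Lateral-expansion prompt: "Tell me three concepts of the same importance as the concept of X." Use: for languages, frameworks, architectures, and the like, when you have just learned one new concept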

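AI summary: Main topic: while learning by building a basic counter app in VS Code, I ran into the following problem. When I tried to display a file containing HTML with the Live Server feature, it no longer appeared. Note: because this is my first post and a hastily written memo, various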

![[Memo-1] Tried to Go Live with HTML in VS Code, but it did not work.](https://qiita-user-contents.imgix.net/https%3A%2F%2Fqiita-user-contents.imgix.net%2Fhttps%253A%252F%252Fcdn.qiita.com%252Fassets%252Fpublic%252Farticle-ogp-background-afbab5eb44e0b055cce1258705637a91.png%3Fixlib%3Drb-4.1.1%26w%3D1200%26blend64%3DaHR0cHM6Ly9xaWl0YS11c2VyLXByb2ZpbGUtaW1hZ2VzLmltZ2l4Lm5ldC9odHRwcyUzQSUyRiUyRmF2YXRhcnMuZ2l0aHVidXNlcmNvbnRlbnQuY29tJTJGdSUyRjI2NDIxNzkwMCUzRnYlM0Q0P2l4bGliPXJiLTQuMS4xJmFyPTElM0ExJmZpdD1jcm9wJm1hc2s9ZWxsaXBzZSZiZz1GRkZGRkYmZm09cG5nMzImcz03YTE1OTJkMWU4MzlmNTM5MzI3NTY1NTkzZDk3MTY1ZA%26blend-x%3D120%26blend-y%3D467%26blend-w%3D82%26blend-h%3D82%26blend-mode%3Dnormal%26s%3D93ed1f865eb8f8d4528ed3975b3ac179?ixlib=rb-4.1.1&w=1200&fm=jpg&mark64=aHR0cHM6Ly9xaWl0YS11c2VyLWNvbnRlbnRzLmltZ2l4Lm5ldC9-dGV4dD9peGxpYj1yYi00LjEuMSZ3PTk2MCZoPTMyNCZ0eHQ9JTVCJUU1JTgyJTk5JUU1JUJGJTk4JUU5JThDJUIyLTElNUQlMjBWU2NvZGUlRTUlODYlODUlRTMlODElQTdIVE1MJUUzJTgyJTkyR28lMjBMaXZlJUUzJTgxJTk3JUUzJTgyJTg4JUUzJTgxJTg2JUUzJTgxJUE4JUUzJTgxJTk3JUUzJTgxJTlGJUUzJTgxJThDJUUzJTgxJTg2JUUzJTgxJUJFJUUzJTgxJThGJUUzJTgxJTg0JUUzJTgxJThCJUUzJTgxJUFBJUUzJTgxJThCJUUzJTgxJUEzJUUzJTgxJTlGJUUzJTgwJTgyJnR4dC1hbGlnbj1sZWZ0JTJDdG9wJnR4dC1jb2xvcj0lMjMxRTIxMjEmdHh0LWZvbnQ9SGlyYWdpbm8lMjBTYW5zJTIwVzYmdHh0LXNpemU9NTYmdHh0LXBhZD0wJnM9MGZjZWVkNDc4ZWNmZDMyNzg1Y2Y1NjZhODVkMjNmMWQ&mark-x=120&mark-y=112&blend64=aHR0cHM6Ly9xaWl0YS11c2VyLWNvbnRlbnRzLmltZ2l4Lm5ldC9-dGV4dD9peGxpYj1yYi00LjEuMSZ3PTgzOCZoPTU4JnR4dD0lNDBhbHN6ZXJvMzIyJnR4dC1jb2xvcj0lMjMxRTIxMjEmdHh0LWZvbnQ9SGlyYWdpbm8lMjBTYW5zJTIwVzYmdHh0LXNpemU9MzYmdHh0LXBhZD0wJnM9ODU2ZDUwMjI1ZWFmOWE1NzZlMmI5NzkxNmM1ODc5NjI&blend-x=242&blend-y=480&blend-w=838&blend-h=46&blend-fit=crop&blend-crop=left%2Cbottom&blend-mode=normal&s=4d5b3f857ce05205165b39fa2e2b9ba6)

Right now, every AI model you've ever used works the same way. You talk, it listens. It responds, you listen. Thinking Machines is trying to change that by building a model that processes your input a

arXiv:2605.08314v1 Announce Type: cross Abstract: SVD-based Low-rank compression reduces transformer parameters and nominal FLOPs, but these savings often translate poorly into real LLM serving speedu

arXiv:2605.09070v1 Announce Type: cross Abstract: Many jailbreak attack research papers report attack success rates for a limited number of parameter settings, even though there are many combinations

arXiv:2605.09228v1 Announce Type: cross Abstract: Most LLM benchmarks score how well a model responds to explicit requests. They leave unmeasured a different conversational ability: noticing and actin
